Dealing with Moral Multiplicity

First written: 28 Dec. 2013; last update: 15 Nov. 2017

The ethical views we hold depend significantly on the network structures of our brains: which ideas are associated with which valences and how strongly. These feelings and weights are shaped by our genetic predispositions, cultural circumstances, and life experiences. Had you developed differently, your moral views would have been different. It's up to us whether we want to update based on this fact to move toward the moral views of others, or whether we want to retain our own happenstance views and merely regard convergence as instrumentally valuable for reasons of compromise. This piece also discusses moral nihilism, natural selection of moralities, and an associative-network model of moral reasoning.


The feeling of morality

Scientists debate the specific evolutionary processes that gave rise to humans' and animals' moral sensibilities, but the original functional purpose of morality is less ambiguous: Morality was a set of community norms that served to enforce fair play. Maintaining these standards allowed a tribal group to overcome prisoner's dilemmas and thereby achieve higher total fitness than if anarchy prevailed. Deviations from these agreements would be punished, and concordance would be rewarded.
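To make the enforcement story concrete, here is a toy one-shot prisoner's dilemma. It is a sketch under stated assumptions, not a model from this essay: the payoff numbers (T=5, R=3, P=1, S=0) and the flat community fine on defectors are hypothetical. Without enforcement, defecting is the best response to either move. Once the fine exceeds both T - R and P - S, cooperating becomes the best response whatever the other player does.

```python
# Toy one-shot prisoner's dilemma. Payoffs follow the usual ordering T > R > P > S;
# the specific numbers and the flat fine for defectors are hypothetical.
T, R, P, S = 5, 3, 1, 0  # temptation, reward, punishment, sucker's payoff
PAYOFF = {("C", "C"): R, ("C", "D"): S, ("D", "C"): T, ("D", "D"): P}


def payoff(me: str, other: str, fine: float = 0.0) -> float:
    """My payoff for move `me` ('C' or 'D') against `other`, minus any fine for defecting."""
    return PAYOFF[(me, other)] - (fine if me == "D" else 0.0)


def best_response(other: str, fine: float = 0.0) -> str:
    """The move that maximizes my payoff given the other player's move."""
    return max("CD", key=lambda me: payoff(me, other, fine))


for fine in (0, 3):
    print(f"fine={fine}: vs C -> {best_response('C', fine)}, vs D -> {best_response('D', fine)}")
```

Mutual cooperation (3 each) beats mutual defection (1 each), so a group whose norms impose such fines ends up with a higher total payoff than one where defection goes unpunished.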

This explains why norms existed, but what would be the reason for people to feel like these norms were more than arbitrary rules that they had to follow only as long as they wouldn't get caught? Why would people attach a sense of higher, objective rightness to them? One standard story to explain this is that those people who felt the community norms were objectively right would follow them in all circumstances, including when they thought they weren't being watched. Then, on the rare occasions when those people were actually being watched, those who followed the rules universally would fare much better. Analogously, I think organizations that follow common-sense ethics are more successful in the long run than organizations that scheme in private and eventually are exposed in a scandal.

We can also observe that communities in which people felt that morality was universal would have had higher total fitness due to cooperating on prisoner's dilemmas even when enforcement wasn't possible, but this explanation invokes group selection. While group selection at the gene level may be dubious because the right mutation would have to happen in many people at once, group selection at the meme level might be more plausible if the meme can spread to most members of the group at the same time. Memes are more like diseases than individual mutations.

Regardless, it's clear that people do have a sense of ethics that's distinct from their other preferences. The idea that "Murder is wrong" feels different from the idea that "I like chocolate." Our brains inform us of the separation between selfishness and adherence to group norms, even though our actions are ultimately a mixture of the two impulses.

Shaping of moral brains

Just as toddlers learn that sharp things hurt when you stick them in your arm and that vegan ice cream is yummy, so they also learn that you shouldn't hit people and slavery is immoral. The first types of lessons mostly come directly from their hedonic systems, while the latter mostly come from social inculcation and hence lodge in a conceptually distinct region of the brain devoted to "conscience" topics. Indeed, there are times when people learn lessons about their own hedonic wellbeing -- e.g., don't drive without a seat belt -- and perhaps because these are learned through social norms rather than direct hedonic reaction, the lessons tend to lodge in the abstract, conscience-type mental regions. Forcing yourself to do what you know is in your own long-term interest feels similar to forcing yourself to do what's good for others; in both cases, the brain doesn't necessarily have direct hedonic learning to make the decision quick and facile. Of course, there are many moral/political issues where people feel passionately rather than just obeying their consciences against other desires. Perhaps in these cases the issue has become sufficiently learned in a hedonic sense that it doesn't require cognitive control to be followed.

Moral principles feel more objective when they've seemed more universal in your development. For instance, in modern Western countries, almost everyone agrees that human slavery is wrong, so people have a strong sense that this is a clear, unquestionable moral fact. In contrast, there's debate over whether it's right for gays to get married, whereas hundreds of years ago, gay marriage would have been unanimously seen as wrong in many cultures and so its wrongness would have also seemed an obvious moral fact to many people. And a few centuries from now, embracing gay marriage will probably be seen as obviously right.

As people learn of cases where other societies had different cultural norms, they sometimes relax their feelings of absolute objectivity. For instance, some may say that it wasn't wrong for the Aztecs to perform human sacrifices, because this was their culture, whereas it would be wrong to do sacrifices in our culture. Moral relativism is one response to disruption of apparent universality of a moral principle. Of course, some incline more toward it than others, partly depending on how much other people in one's milieu endorse it. If you put a random person in an anthropology department, he's more likely to become morally relativistic when you remind him about Aztec sacrifices than if you put him in a Baptist church.

Changes in moral outlook are subject to the laws of brain plasticity just like the hedonic system or other brain systems, and flexibility tends to decline with age. Usually, the more people hear a certain view and spend time with others who espouse it, the more they adopt it themselves, unless they actively apply a negative gloss onto the messages so as to negate their valence. (Think of people saying "Boo! Hiss!" when they hear a politician of the opposing party.) Many moral views come from associating a brain region for a concept (say, animal farming) with a brain region that already has strong moral valence (say, slavery), thereby glossing the concept with that same valence. Why is abortion wrong? Because it's murder (which has strong existing negative valence). Why is abortion acceptable? Because it's a woman's choice about her body (invoking strong positive valence associated with individual autonomy). Why is gay marriage wrong? Because it destroys the sanctity of marriage and the family (which have strong existing positive valence). Why is gay marriage right? Because gays deserve equality, and allowing gays to marry would strengthen the institution of marriage, not weaken it (strong positive valence). And so on. See the "Appendix: Ethical reasoning using associative networks" for one model of how this smearing of valence might work.

Associations account for some types of moral reasoning (e.g., "X is stealing. Stealing is wrong. Therefore, X is wrong."). As Joshua Greene would claim, other types of moral reasoning are slower, more calculated, and more consequentialist. For instance, consider the moral dilemma of whether you should suffocate a crying baby if this is the only way to prevent your whole congregation, including the baby, from being heard and killed by armed guards. Our immediate associative response is "suffocating babies is bad," but a more nuanced response, noting that the baby would die anyway, may be different. Still, the reasoned response too ultimately relies on propagating valence from certain ideas (e.g., "saving more lives is better") back to conclusions ("it's better to suffocate the baby in this particular case"). Over time, if these reasoning chains are encountered often, neural shortcuts develop, and then moral calculation is no longer required. In any event, calculated moral reasoning is not necessarily "better" than associative reasoning, although some communities might claim it to be better -- ironically, based on the positive associations that they have with calculated reasoning!

Moral nihilism?

Our ethical views would be different if we had grown up differently. Genes play a big role, as does early childhood development. Even immediate factors can modify our outlooks from moment to moment. Paul Christiano notes that blood sugar has an effect. We're also randomly influenced by being tired, being alone vs. with friends, what movie we watched yesterday, and countless other seemingly trivial forces. When we think in naive terms about the grand system of morality, it feels like incidental, random occurrences in our past shouldn't be so influential, or even influential at all, on what we judge to be right or wrong.

If I feel X, but I could have grown up to feel Y, and other people feel Z, then isn't it all pointless? This is the perspective of moral nihilists, and I think fear of nihilism is one common motivation for holding on to moral realism, if only by confused Pascalian reasoning, in the face of the ultimate arbitrariness of our moral sentiments. Of course, nihilism contradicts itself: If there are no objective standards of judgment, then it's not even true to say that "morality is pointless" because there's no such thing as something being pointless or not. Still, this doesn't trouble the persistent nihilist, who can simply refuse to engage in this conversation any further because it's all meaningless -- whatever that's supposed to mean.

When people think a moral statement is objective, they usually feel like, "I just know ABC is right. It can't possibly be otherwise." Then, when we imagine ourselves growing up somewhat differently and coming to hold a different view due to changed circumstances in genes or development, we still project our self-identity onto that different person, and then we realize that, "I could have not felt that ABC was right. Hmm. Maybe it's not The Right Thing after all." This causes the "morality feeling" in our brains to shut down, and our once noble moral impulses begin to look more like egoistic desires. The "magic" of absolute rightness fades away.

I think this is a failure mode that we can avoid. We should recognize what's going on, yes, but why let ourselves turn off the magical moral feeling in our brains? Why not keep it, if it makes us feel better and is more consistent with our desires anyway? We can value the magic moral feeling as though it were still magic if we choose, and I choose to do so. It's not Wrong to feel the magic even after understanding what's under the hood, any more than it's Wrong to still like chocolate after learning about pleasure glossing in the ventral pallidum and realizing that you could have been wired to enjoy the taste of elephant dung instead.

Arbitrary genes and personal experiences may make you choose different values than someone else, but without any genes or personal experiences, there would be no "you" at all. In some sense, the arbitrariness of one's genes and history is essential to ethics; it's not necessarily a bias that we should try to eliminate. Without your own arbitrary initial conditions, you might instead be a paperclip maximizer (insofar as you would still be "you" at all if you had such radically different moral beliefs).

Compromise as a new objective morality?

Even if different groups have different moral goals, they'll find it advantageous to compromise in positive-sum ways, such as cooperating on moral versions of the prisoner's dilemma. This may lead to somewhat convergent outcomes insofar as compromise aggregates different views roughly in proportion to bargaining leverage.
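A minimal sketch of "aggregating views in proportion to bargaining leverage", assuming the compromise is simply a leverage-weighted average of each party's support for some policy. The parties, support levels, and leverage numbers are all hypothetical. A party with zero leverage has no effect on the outcome, whatever it wants.

```python
# Hypothetical parties: support for some policy in [-1, 1] and bargaining leverage.
parties = {
    "group_a": {"support": 0.8, "leverage": 6.0},
    "group_b": {"support": -0.5, "leverage": 3.0},
    "farm_animals": {"support": -1.0, "leverage": 0.0},  # no bargaining power of their own
}


def compromise(parties: dict) -> float:
    """Leverage-weighted average of each party's support."""
    total = sum(p["leverage"] for p in parties.values())
    return sum(p["support"] * p["leverage"] for p in parties.values()) / total


print(f"{compromise(parties):.3f}")  # 0.367
```

Flipping `farm_animals`' support from -1 to +1 leaves the result unchanged, which is the worry about the weak and voiceless that power-weighted compromise raises.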

The evolutionary motivation for the feeling of objective morality at the individual level was that it was a form of pre-commitment to following group norms based on cooperation. By the same token, we could imagine feeling that a stance which aggregates different moral views into a unified, compromise moral view is "objective," giving us magic moral-glow feelings. This would be a second layer of aggregation: First there were egoistic desires that got aggregated to moral tribal norms, and then the remnants of those tribal norms get aggregated to meta-level worldwide norms. Of course, most of the motivations in the world are not moral norms but still regular egoism, so base-level egoism would have significant sway over the compromise morality as well.

What do I think of this proposal? I like the fact that feeling the glow of objective morality can motivate people to compromise, and this might be a useful intuition pump. On the other hand, because compromise is weighted by power, it feels unfair to the weak and voiceless, including all non-human animals, whose only representation comes from the extent to which other humans happen to sympathize with them. Of course, we can't ask for anything better. Usually a power-weighted compromise is the best possible outcome for achieving whatever goal we want in expectation. One of the lessons of Robert Axelrod's tournaments on the iterated prisoner's dilemma was "don't be envious." Still, I'm not comfortable moving my moral compass toward feeling like "might actually makes right" rather than just feeling that "power-weighted compromise is the best outcome we can hope for given the circumstances."

What about reflective equilibrium?

Reflective-equilibrium views aim to resolve ethical disagreements into a self-consistent whole that society can accept. One example is Eliezer Yudkowsky's coherent extrapolated volition (CEV), which would involve finding convergent points of agreement among humans who know more, are more connected, are more the people they wish they were, and so on.

A main problem with this approach is that the outcome is not unique. It's sensitive to initial inputs, perhaps heavily so. How do we decide what forms of "extrapolation" are legitimate? Every new experience changes your brain in some way or other. Which ones are allowed? Presumably reading moral-philosophy arguments is sanctioned, but giving yourself brain damage is not? How about taking psychedelic mushrooms? How about having your emotions swayed by poignant music or sweeping sermons? What if the order of presentation matters? After all, there might be primacy and recency effects. Would we try all possible permutations of the material so that your neural connections could form in different ways? What happens when -- as seems to me almost inevitable -- the results come out differently at the end?[1]
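The question of presentation order can be illustrated with a toy update rule. This sketch assumes, hypothetically, that a view is a single number nudged toward each new input; the three inputs and their values are made up. With a fixed step size, later inputs count more (a recency effect), so the six orderings of the same three inputs end in six different places. With a step of 1/(k+1), the rule reduces to a running mean and order stops mattering. Which rule counts as legitimate "extrapolation" is itself a choice.

```python
from itertools import permutations

# Hypothetical inputs: each experience pulls the view toward its own valence.
ARGUMENTS = {"essay": 1.0, "sermon": -0.5, "documentary": 0.2}


def final_view(order, decaying=False, rate=0.5):
    """Update a scalar 'view' toward each input in turn.

    decaying=False: fixed step `rate`, so later inputs weigh more (recency).
    decaying=True:  step 1/(k+1), which reduces to the plain running mean,
                    so the order of inputs no longer matters.
    """
    view = 0.0
    for k, name in enumerate(order):
        step = 1.0 / (k + 1) if decaying else rate
        view += step * (ARGUMENTS[name] - view)
    return view


def distinct_outcomes(decaying=False):
    """Sorted set of endpoints over every ordering of the inputs."""
    return sorted({round(final_view(p, decaying), 3) for p in permutations(ARGUMENTS)})


print(distinct_outcomes())               # six different endpoints
print(distinct_outcomes(decaying=True))  # [0.233]
```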

There's no unique way to idealize your moral views. There are tons of possible ways, and which one you choose is ultimately arbitrary. (Indeed, for any object-level moral view X, one possible idealization procedure is "update to view X".) Of course, you might have meta-views over idealization procedures, and then you could find idealized idealized views (i.e., idealized views relative to idealized idealization procedures). But as you can see, we end up with infinite regress here.

I like to picture our moral intuitions like a leaf blowing on the sidewalk. Asking "What do we really want?" is like asking, "In what direction does the leaf really want to blow?" Another analogy for our moral intuitions is a board game, like Chutes and Ladders: Roll the dice of environmental stimuli, move forward some amount, and maybe be transported up or down by various forces.

Sometimes you might reach an absorbing state of reflective equilibrium, but there are many such absorbing states you could fall into. The absorbing states you'd reach studying philosophy are different from those you'd reach studying biology, which are different from those you'd reach studying international relations. Each field changes your brain in different ways. And there may be path dependence due to early neural plasticity that becomes less flexible over time. One analogy people sometimes give is with sledding on fresh snow: After you've carved out certain paths, it can be harder to make other paths rather than falling back into the original ones. Of course, with artificial brains, we could reset the initial conditions (undoing the sledding marks) if desired.

And whom do we extrapolate? CEV suggested all humans on Earth, but why stop there? Why not ancestral humans on the African savanna? How about whatever intelligent life would have evolved if the dinosaurs had not gone extinct? Aliens? Beings in other parts of the multiverse? Wacky minds that never evolved but could still be constructed, like pebble sorters?

Maybe one answer is: Let's try a bunch of initial conditions and extrapolation methods and see if some attractors are more common than others. Yes, convergence is not unique, but we might still be able to rule out a lot of moral stances as not stable. Maybe we could weight among the stable attractors that remain, although the relative numerosity of different attractors would itself depend on the parameters for the process. For instance, if we started with arbitrary initial minds, we might find that suffering increasers were about as numerous as suffering reducers, while if we started with initial minds weighted by their prevalence as evolved creatures, we would have mostly suffering reducers in the end because suffering reduction should be a common tribal norm across a broad array of societies.
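The dependence of attractor counts on the starting distribution can be seen in a toy absorbing random walk. Everything here is a hypothetical assumption: 11 positions, fair-coin drift, and two made-up starting distributions, with position 0 standing in for "suffering increasers" and position N for "suffering reducers". Both endpoints are stable; which one a run lands in depends on where it starts, so the share of runs ending at each attractor is set by how the initial minds are weighted.

```python
# Toy model: a view drifts by a fair coin flip along positions 0..N.
# Positions 0 and N are absorbing "attractors"; everything here is hypothetical.
N = 10


def absorption_probs(start: int, steps: int = 2000) -> tuple[float, float]:
    """Return (P[absorbed at 0], P[absorbed at N]) by evolving the distribution."""
    dist = [0.0] * (N + 1)
    dist[start] = 1.0
    for _ in range(steps):
        new = [0.0] * (N + 1)
        new[0], new[N] = dist[0], dist[N]  # absorbing states keep their mass
        for i in range(1, N):
            new[i - 1] += 0.5 * dist[i]
            new[i + 1] += 0.5 * dist[i]
        dist = new
    return dist[0], dist[N]


def attractor_shares(initial: dict[int, float]) -> tuple[float, float]:
    """Average the absorption probabilities over a distribution of starting minds."""
    probs = {s: absorption_probs(s) for s in initial}
    low = sum(w * probs[s][0] for s, w in initial.items())
    high = sum(w * probs[s][1] for s, w in initial.items())
    return low, high


uniform = {s: 1 / 9 for s in range(1, N)}  # "arbitrary initial minds"
skewed = {7: 1 / 3, 8: 1 / 3, 9: 1 / 3}    # starts clustered near one end
print(attractor_shares(uniform))  # roughly (0.5, 0.5)
print(attractor_shares(skewed))   # roughly (0.2, 0.8)
```

For this symmetric walk the exact answer is the classic gambler's-ruin result: starting at position k, the chance of ending at N is k/N.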

Another trouble is that CEV as presented seems to require intelligent agents that can reason about ethics, have discussions, hear arguments, and reflect on new experiences. This rules out a large chunk of non-human animals from the process. Are we going to neglect them even though they have emotions and implicit desires just like humans do? I would hope not, but convincing others of this would itself be a challenge.

As with compromise morality, I like the fact that CEV provides an intuition pump in favor of cooperation, which is good for all of us. However, as with compromise morality, I'm not sure I would label it as what I actually want, rather than just as an admirable goal to work toward because it's something people can agree on rather than fighting each other.

Moral imprinting?

It's a common observation that older people rarely change their minds, except maybe on topics that they haven't thought about much. To some extent this ossification of beliefs is rational. For example, a Bayesian approach to the sunrise problem will show smaller and smaller updates for the probability that the sun rises tomorrow as the number of data points increases. Relatedly, some machine-learning algorithms use learning-rate decay over time so that later information causes less of an update than earlier information. In other cases, stubbornness by older people is counterproductive.
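The sunrise example can be checked with Laplace's rule of succession, which assumes a uniform prior on the unknown probability that the sun rises: after n consecutive sunrises, P(sunrise tomorrow) = (n+1)/(n+2). Each additional sunrise moves this estimate by 1/((n+2)(n+3)), so updates shrink roughly with the square of the evidence already seen. The learning-rate function shows one common decay schedule (inverse-time decay); its parameter values are arbitrary.

```python
from fractions import Fraction


def rule_of_succession(n: int) -> Fraction:
    """P(sunrise tomorrow | n sunrises in n days), uniform prior (Laplace)."""
    return Fraction(n + 1, n + 2)


def update_size(n: int) -> Fraction:
    """How far one more observed sunrise moves the estimate."""
    return rule_of_succession(n + 1) - rule_of_succession(n)


def inverse_time_decay(step: int, lr0: float = 0.1, decay: float = 0.5) -> float:
    """A common learning-rate schedule: later steps get smaller updates."""
    return lr0 / (1 + decay * step)


print(rule_of_succession(10))  # 11/12
print(update_size(10))         # 1/156
print([round(inverse_time_decay(t), 3) for t in range(4)])
```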

A few people show belief plasticity throughout their lifetimes. One example might be Hilary Putnam: "Putnam himself may be his own most formidable philosophical adversary. His frequent changes of mind have led him to attack his previous positions." But it seems that most people maintain their general stances on issues throughout their careers. (Thanks to Simon Knutsson for this point.)

The same often applies in the field of ethics. My informal experience has been that it's almost impossible to change the moral views of someone over age ~25 (plus or minus a few years), while many younger people are more "easy come, easy go" on moral issues. Once again, there are a few exceptions, like when Peter Singer switched from preference to hedonistic utilitarianism in his 60s(?). My anecdotal experience that people's views become more rigid by their late 20s is consistent with the following passage from SAMA (2013):

Though teens may change clothes, ideas, friends and hobbies with maddening frequency, they are developing ideas about themselves, their world and their place in it that will follow them for the rest of their lives. Adults may spend years trying to create or break even the simplest habit, yet most adults find that their most profound ideas about themselves and the world were developed in high school or college. This is because, by age 25 or so the brain is fully developed and building new neural connections is a much slower process.

In my own case, the core of my moral view (namely, the overwhelming importance of reducing extreme suffering) was set by age 19, and the moral updates I've had since then have been either tweaks around the edges or revisions forced by an ontological crisis, such as the realization that consciousness is not an objective metaphysical property.

It's possible that there's a sort of critical period for morality in which people "imprint" on various moral views. Or maybe the ossification of people's moral opinions with age is just a byproduct of the general ossification of people's minds about all sorts of topics. Either way, the fact of ossification presents a problem for moral idealization procedures, since even if we learn more, many of our views may already be substantially fixed. Meanwhile, if we allow for simulation of alternate possible development trajectories that we might have taken since birth or childhood, then our final conclusions in those simulations are likely to be fairly sensitive to what ideas we're exposed to first versus what ideas we only discover after substantial ossification has already taken place.

If the formation of moral views is more like sexual imprinting than it is like understanding math, then it seems unlikely that humanity would converge on a shared moral vision via moral idealization, just as it's unlikely that billions of people raised in different environments would all agree on which specific physical features of a potential mate are sexually attractive if they could just learn and discuss more with one another. Of course, there are many features of physical attractiveness that are mostly universal among humans, just like some moral judgments are mostly universal among humans.

Some moralities survive better

Humans hold roughly accurate factual beliefs (at least on concrete, day-to-day matters) because those whose brains led to incorrect views were more likely to fall off cliffs, eat poisonous berries, or fail to acquire mates. As society becomes increasingly complex, those agents that display greater rationality toward the aim of acquiring power tend to survive better. Note that this is not always the same as epistemic rationality; for instance, religions that oppose birth control survive very successfully just due to reproduction rates. But in the long run, I expect that survival will require more and more epistemic rationality due to competition with other smart agents.

In the same way as evolution favors correct factual beliefs in the long run, it presumably also favors certain moral attitudes. As a simple case, ideologies demanding celibacy from all followers (a la the Shakers) are unlikely to survive, and similar fates probably await antinatalist movements. Likewise, moral views that repugn strategic thinking may ultimately lose out to those that embrace it.

By "strategic thinking" I don't mean naive Machiavellian deviousness; that historically has lost out to behaviors more like virtue ethics in human evolution. I don't expect a morality that endorses bank robbing for the greater good to win the future. But I do mean that moralities that fail to adaptively develop intelligent survival strategies risk becoming stale and less competitive. This doesn't imply that the morality itself has to endorse a particular survival-maximizing content. But the morality may need to have a strategic shell to enclose its inner seeds if it is to survive in the long run.

If we wanted to be charitable to adherents of moral realism, we could suggest the requirement that agents of the future behave strategically as one candidate feature of objective morality. Just as evolution constrains survivors to have certain factual beliefs, so too competitive struggles may push agents toward particular behaviors -- at least game-theoretic rationality if nothing else more specific. Beyond that, it's not clear if selection pressure strongly favors particular ideologies ex ante. So I expect this idea does little justice to what disciples of moral realism were hoping for.

Caveats

Many speculations in this piece are just-so stories, at least until further evidence comes in. In addition, many of these ideas are probably unoriginal, though if other authors have said the same things, I don't know the citations. Most of what I wrote is common cultural knowledge among my friends.

Appendix: Ethical reasoning using associative networks

Our brains use associations to make connections between concepts. For instance, if I say "ancient Egypt," you might think of "pyramids," "Nile River," and "hieroglyphs." Likewise, if I had first said "hieroglyphs," it likely would have brought to mind the general concept of ancient Egypt. Our brains have a bidirectionally associative network among these ideas.

Moral disagreements

We can imagine adding a valence to various concepts, as well as associational weights between them. The weights correspond to how numerous and strong the neural connections in our brains are.

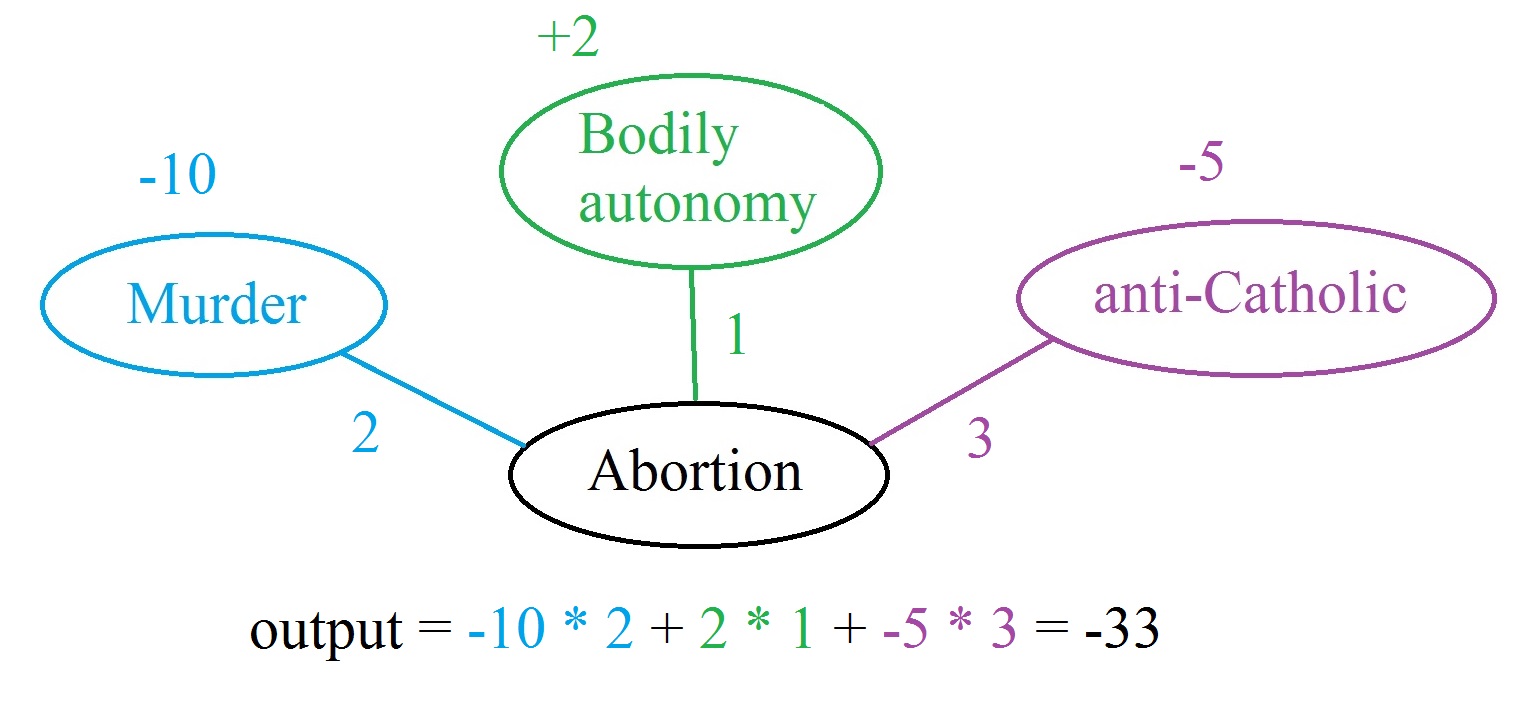

Take a moral issue like abortion. Consider a pro-life advocate who believes that abortion is murder and cares about the views of the Catholic Church. She does recognize that bodily autonomy is somewhat important, but this consideration is outweighed by the murder and anti-Catholic associations that abortion has for her. We can represent this collection of sentiments in a network like the following:

The output score on "Abortion" is strongly negative.

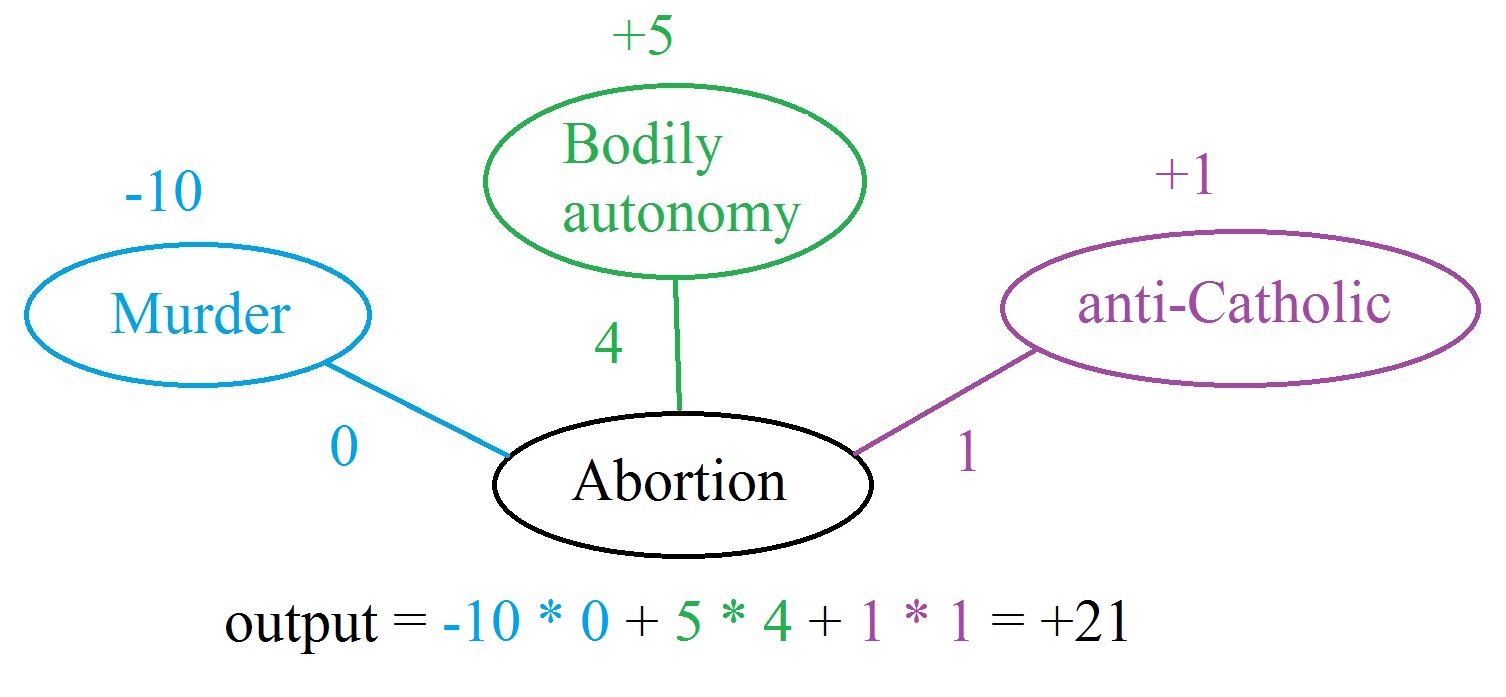

A different person might have the same network but different valences and weights:

This person doesn't believe abortion is murder. He also values bodily autonomy more heavily. And unlike the previous case, this person dislikes the Catholic Church and so sees abortion's opposition to Catholic doctrine as a small positive argument for it. Thus, we can see how two people come to different conclusions because their neural wiring is different, due to divergent genes and life experiences.

Networks encode syllogisms

The product of valence times connection weight does the work of a logical syllogism:

- Murder is wrong. (valence = -10)

- Abortion is murder. (weight = 2 in the first case)

- Therefore, abortion is wrong. (valence * weight = -20)

Because these numbers have continuous values and we can sum up multiple conflicting considerations, the network approach is more powerful and descriptive of how brains actually work than the syllogistic approach. Also note that for the person who did not believe abortion was murder, the syllogism would not go through because his valence * weight evaluated to 0.
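The arithmetic above can be written out directly. In this sketch, each concept linked to the target node carries a (valence, weight) pair, and the target's output is the sum of valence times weight. Only the Murder valence of -10 and the weights 2 and 0 come from the text; the Bodily autonomy and Catholic Church numbers are hypothetical stand-ins for the diagrams, chosen to match the verbal description of the two people.

```python
# Each person: concept -> (valence of that concept, weight of its link to "Abortion").
# Only Murder's -10 and the weights 2 and 0 come from the text;
# every other number is a hypothetical stand-in for the diagrams.
pro_life = {
    "Murder": (-10, 2),
    "Bodily autonomy": (5, 1),
    "Catholic Church": (5, -1),   # negative weight: abortion opposes the Church
}
pro_choice = {
    "Murder": (-10, 0),           # does not believe abortion is murder
    "Bodily autonomy": (5, 3),    # values bodily autonomy more heavily
    "Catholic Church": (-2, -1),  # dislikes the Church, so opposition counts slightly in favor
}


def evaluate(network: dict[str, tuple[float, float]]) -> float:
    """Output valence of the target node: sum of valence * weight over its links."""
    return sum(valence * weight for valence, weight in network.values())


print(evaluate({"Murder": (-10, 2)}))  # the bare syllogism: -20
print(evaluate(pro_life))              # -20 + 5 - 5 = -20
print(evaluate(pro_choice))            # 0 + 15 + 2 = 17
```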

Actions influencing beliefs

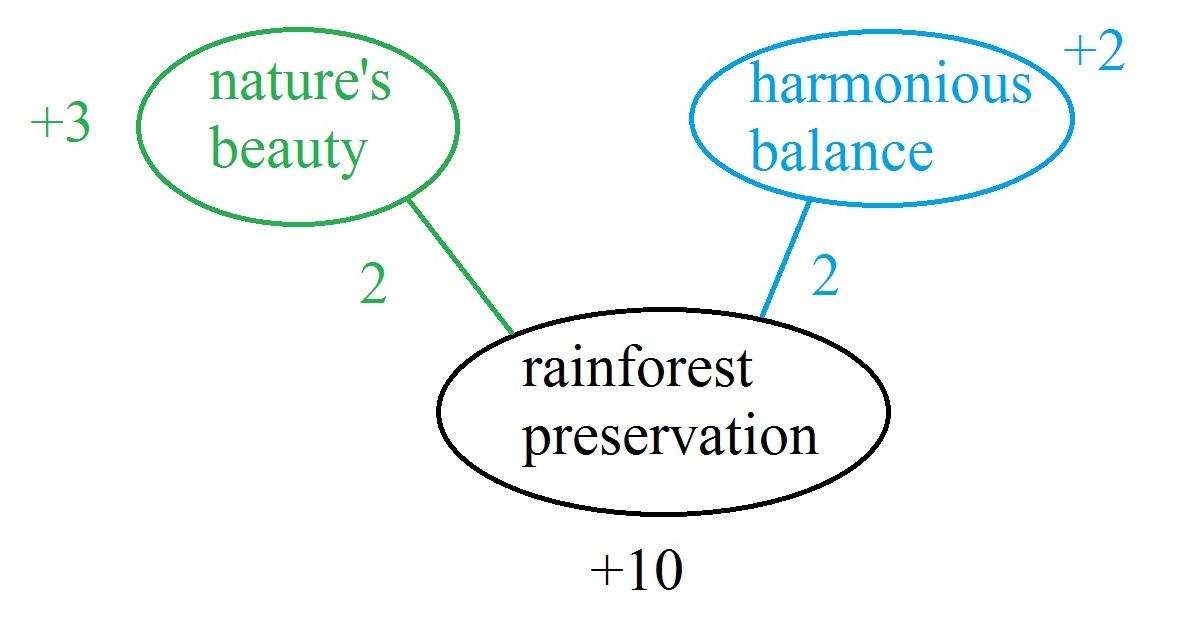

Because the networks are bidirectional, nodes at the bottom may influence those at the top. For instance, suppose someone naively starts out with this network:

But then the person realizes, "Wait, I eat meat, and it tastes good. I don't want to give it up. But I'm also not a bad person, and I can't let the output node evaluate negative. Hmm..." This is cognitive dissonance. One solution is to amend the weights and valences to make the problem go away. For instance, maybe the person would end up with this:

Here the defensive omnivore has also adduced additional connections to make sure the final output evaluates to being positive. This illustrates the process by which actions influence beliefs rather than beliefs always determining actions.
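A toy version of this dissonance-reduction move, with hypothetical numbers since the diagrams are not reproduced here. The action node is held fixed; links that contribute negatively are weakened, and if the output is still not positive, a new supporting connection is added, sized just large enough to flip the sign. The shrink factor and the name of the defensive node are illustrative assumptions.

```python
# Hypothetical naive network for "Eating meat": (valence, weight) per linked concept.
naive = {
    "Causes animal suffering": (-8, 1.0),
    "Tastes good": (3, 1.0),
}


def evaluate(network):
    """Output valence: sum of valence * weight over linked concepts."""
    return sum(valence * weight for valence, weight in network.values())


def rationalize(network, shrink=0.5):
    """Keep the action, change the beliefs: weaken every link that contributes
    negatively, then, if the output is still not positive, adduce a new
    supporting connection just strong enough to make it +1."""
    net = {k: (v, w * shrink if v * w < 0 else w) for k, (v, w) in network.items()}
    deficit = -evaluate(net)
    if deficit >= 0:
        net["Humans evolved to eat meat"] = (1, deficit + 1)  # hypothetical defense
    return net


print(evaluate(naive))               # -5.0
print(evaluate(rationalize(naive)))  # 1.0
```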

Reflective equilibrium

Associative networks can also limn the process of reflective equilibrium, which is basically the resolution of cognitive dissonance. For example, suppose a nature lover starts out with this network:

Then this person is presented with the facts that nature is filled with immense suffering and that this suffering is unfair:

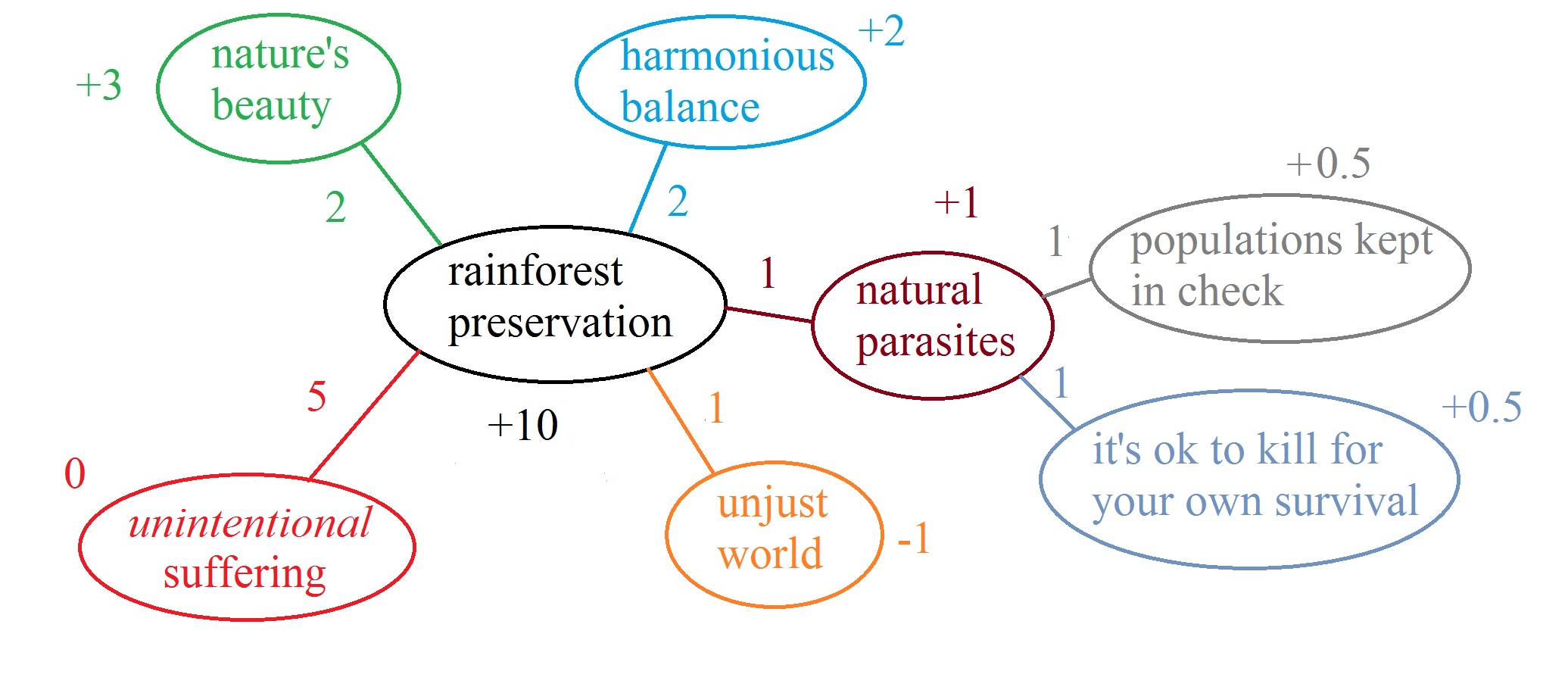

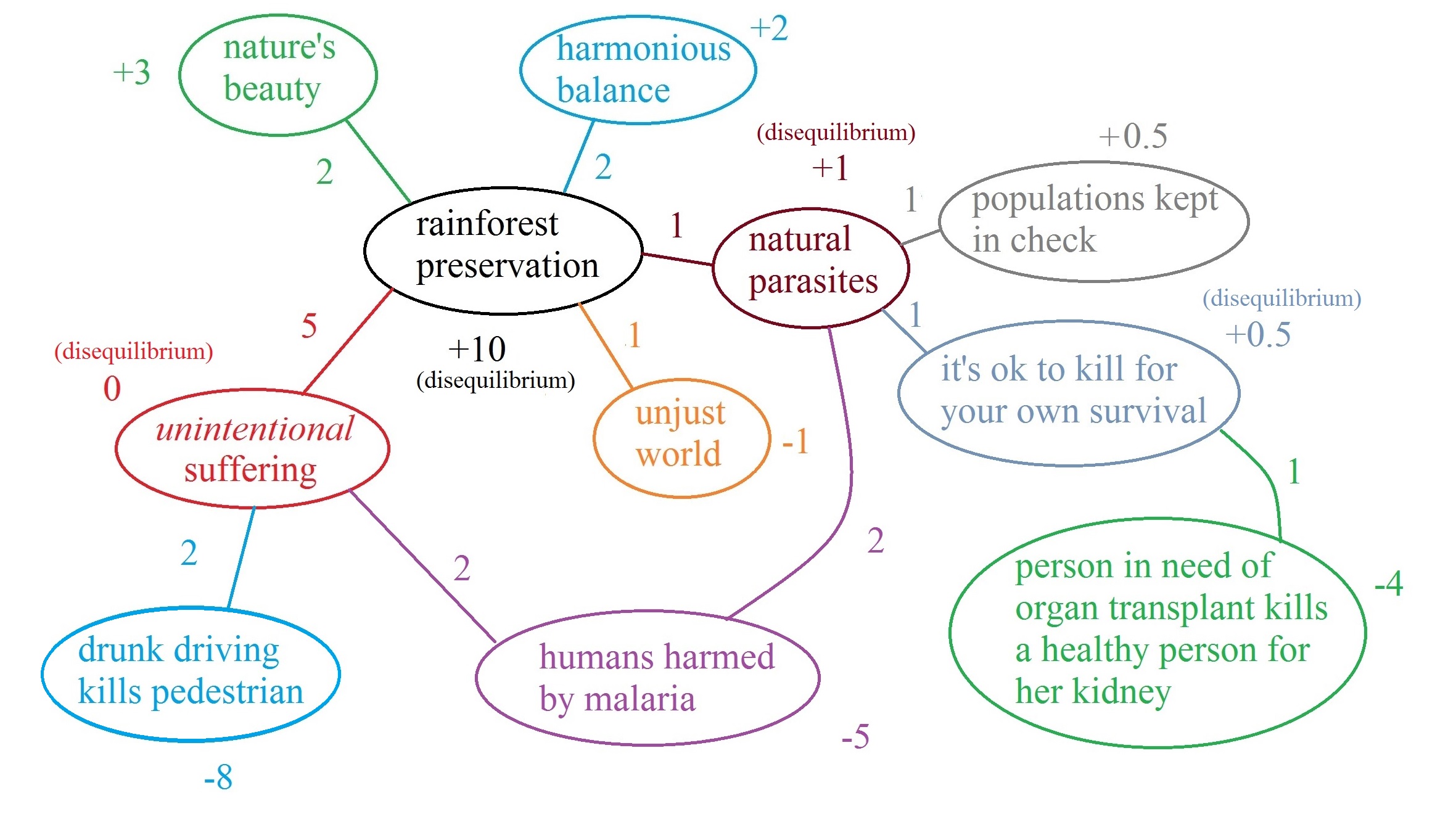

Now the conservationist is in reflective disequilibrium because a value of +10 for the central node is inconsistent with the weighted sum of its surrounding nodes. The conservationist might adjust by changing some of the connections:

Here the conservationist has replaced "immense suffering" with "unintentional suffering," which she has decided is not bad. She has also downweighted the significance of nature's injustice, and she has added a node defending parasitism because of its virtues. The result is that she has returned to a value of +10 for rainforest conservation.

Now imagine that the conservationist hears counterarguments to her changed valences. For instance, if parasites are good, why is malarial infection of humans bad? If unintentional suffering is okay, why is it wrong when a drunk driver accidentally kills a pedestrian? And so on. Adding these nodes throws the network into disequilibrium once more, such that the old valences are no longer the weighted sums of their inputs:

These pictures resemble argument maps.
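One way to make "reflective disequilibrium" quantitative, assuming a node is in equilibrium when its held valence equals the weighted sum of its neighbors: measure the gap between the two. The node names follow the conservationist example, but the valences and weights are hypothetical.

```python
def weighted_input(neighbors):
    """What the neighbors imply: sum of valence * weight."""
    return sum(v * w for v, w in neighbors.values())


def disequilibrium(claimed, neighbors):
    """Gap between a node's held valence and what its neighbors imply."""
    return abs(claimed - weighted_input(neighbors))


# Neighbors of "Rainforest conservation", held at +10 throughout (hypothetical numbers).
start = {"Beautiful wilderness": (5, 2)}
with_facts = {**start, "Immense suffering": (-10, 1), "Unfairness of nature": (-5, 1)}
adjusted = {
    **start,
    "Unintentional suffering": (0, 1),  # she decides this is not bad
    "Unfairness of nature": (-5, 0.2),  # downweighted
    "Virtues of parasitism": (1, 1),    # newly added defense
}

for name, net in [("start", start), ("with facts", with_facts), ("adjusted", adjusted)]:
    print(name, disequilibrium(10, net))
```

Adding counterarguments (malaria, drunk drivers) would change the valences of the new nodes and reopen the gap, which is the back-and-forth described above.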

Neural-network properties

I'm still a little unclear on how this network model should work. The representation so far is redolent of a non-binary version of Hopfield nets, and the process of making a philosophical argument would correspond to updating a node's weights based on the values of other nodes in its surroundings. However, the network can't be literally bidirectional because, for example, in the abortion diagrams, it's not the case that once the "Abortion" node is updated, the "Bodily autonomy" node should update to exactly its same score just because it's the only node connected to "Abortion." Maybe the fact that in practice all these nodes would have many other connections to other nodes would fix this problem. Or maybe the network weights are different depending on the direction. Someone with more neural-network experience could probably patch up this model.
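The "Bodily autonomy" problem can be reproduced in a few lines, along with the two patches suggested above. This is a sketch under hypothetical numbers, not a settled model: nodes are updated one at a time as in a classic Hopfield net, but the directional version departs from Hopfield nets by allowing asymmetric weights and a self-link (standing in for a node's many other connections). Only the nodes in `order` are updated; "Murder" is treated as a fixed input.

```python
def update_in_order(values, W, order):
    """Asynchronous update (one node at a time): each listed node is replaced
    by the weighted sum of its current sources in W[node]."""
    values = dict(values)
    for node in order:
        values[node] = sum(w * values[src] for src, w in W[node].items())
    return values


start = {"Murder": -10.0, "Bodily autonomy": 5.0, "Abortion": 0.0}
order = ["Abortion", "Bodily autonomy"]

# Symmetric weights: "Bodily autonomy" hears only from "Abortion", so it
# simply copies Abortion's new score.
symmetric = {
    "Abortion": {"Murder": 1.0, "Bodily autonomy": 1.0},
    "Bodily autonomy": {"Abortion": 1.0},
}

# Direction-dependent weights plus a self-link let "Bodily autonomy"
# keep most of its own valence.
directional = {
    "Abortion": {"Murder": 1.0, "Bodily autonomy": 1.0},
    "Bodily autonomy": {"Bodily autonomy": 0.9, "Abortion": 0.1},
}

print(update_in_order(start, symmetric, order)["Bodily autonomy"])    # -5.0
print(update_in_order(start, directional, order)["Bodily autonomy"])  # 4.0
```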

I don't claim that this network model is a fully accurate depiction of human moral reasoning, and it's maybe not the only type of moral reasoning that humans do. Still, the framework is suggestive and helps conceptualize how we see people thinking and arguing. For example, the noncentral fallacy involves attempting to strengthen connection weights between an atypical instance of a hated concept and the hated concept itself, in order to propagate negative valence from the hated concept to the atypical instance. And choosing words to evoke desired responses is well known to marketers, political strategists, and anyone who has employed oblique language to avoid saying something unpleasant outright.

In passing, we can reflect on what these networks have to say about metaethics. For one, they explain how non-cognitivism still allows ethical statements to obey syllogistic inference rules, despite what critics of emotivism sometimes allege.[2] They also suggest a sort of coherentist brand of moral truth, because the network activations can propagate both ways. Of course, we could capture foundationalism instead if we made the networks feedforward, but I think a recurrent network better captures the fact that people seem to have no single axiom set but are typically willing to change any given view when it conflicts too strongly with other views.

The moral valence of a statement is not necessarily the same as its hedonic valence. Indeed, sometimes the two may be opposed, like in the case where you know "I should leave that ice cream for my friend," but your hedonic system says "I want to eat my friend's ice cream." Still, it's possible that moral valence could be represented by a separate system that shares similarities to the hedonic-valence system.

Visceral moral feelings could involve a lot of different emotions. For example:

- murder evokes anger and horror

- incest evokes disgust

- saluting the flag evokes loyalty

- seeing starving children evokes sadness

- etc.

So presumably if we could understand each of those emotions, we could understand their moral instantiations. When people say "abortion is murder," they're aiming to build neural connections from the "abortion" concept to the "murder" concept, so that hearing "abortion" will trigger the anger and horror normally associated with murder. The murder concept itself may just be directly hooked up to whatever systems trigger the emotions of anger and horror. These connections in turn may have been built by cultural teachings combined with experienced unpleasantness when thinking about or watching murders.

Footnotes

1. Yudkowsky (2004) addresses this point (p. 17):

A CEV might survey the “space” of initial dynamics and self-consistent final dynamics, looking to see if one alternative obviously stands out as best; extrapolating the opinions humane philosophers might have of that space. But if there are multiple, self-consistent, satisficing endpoints, each of them optimal under their own criterion—okay. Whatever. As long as we end up in a Nice Place to Live.

2. The anti-emotivist argument can be seen as misguided even more simply. Consider the following syllogism:

   - Harry Potter's father was killed by Voldemort.

   - James Potter is Harry Potter's father.

   - Therefore, James Potter was killed by Voldemort.

   We can make sense of this reasoning even though the statements are false in the real world.